What Claude Mythos & Project Glasswing mean for Product Builders

Claude Mythos just dropped and it scared Anthropic. Project Glasswing forms. What does it mean for Product Builders?

Last week, Anthropic dropped Claude Mythos Preview, a model that’s exceptionally strong at finding and exploiting security vulnerabilities.

It’s so capable that they didn’t release it to the public.

Instead, they launched Project Glasswing, a coordinated effort to secure the world’s most critical software before models like this become widely available and bad actors get their hands on them.

And here’s the scary part: the hacker-like capabilities of these models are only going to get better. Here’s what the Anthropic statement had to say:

“We see no reason to think that Mythos Preview is where language models’ cybersecurity capabilities will plateau.”

This could be the Y2K of our generation

Y2K was a known problem. It was visible, measurable, and had a deadline. Companies mobilized, fixed the issue, and moved on.

This is something else entirely.

Mythos has already surfaced thousands of vulnerabilities, most of which Anthropic can’t even disclose yet.

And open-source models are likely only months behind.

This will be a race to secure the world’s most critical software, everything from banking to transportation to logistics.

Models are powerful & yet…

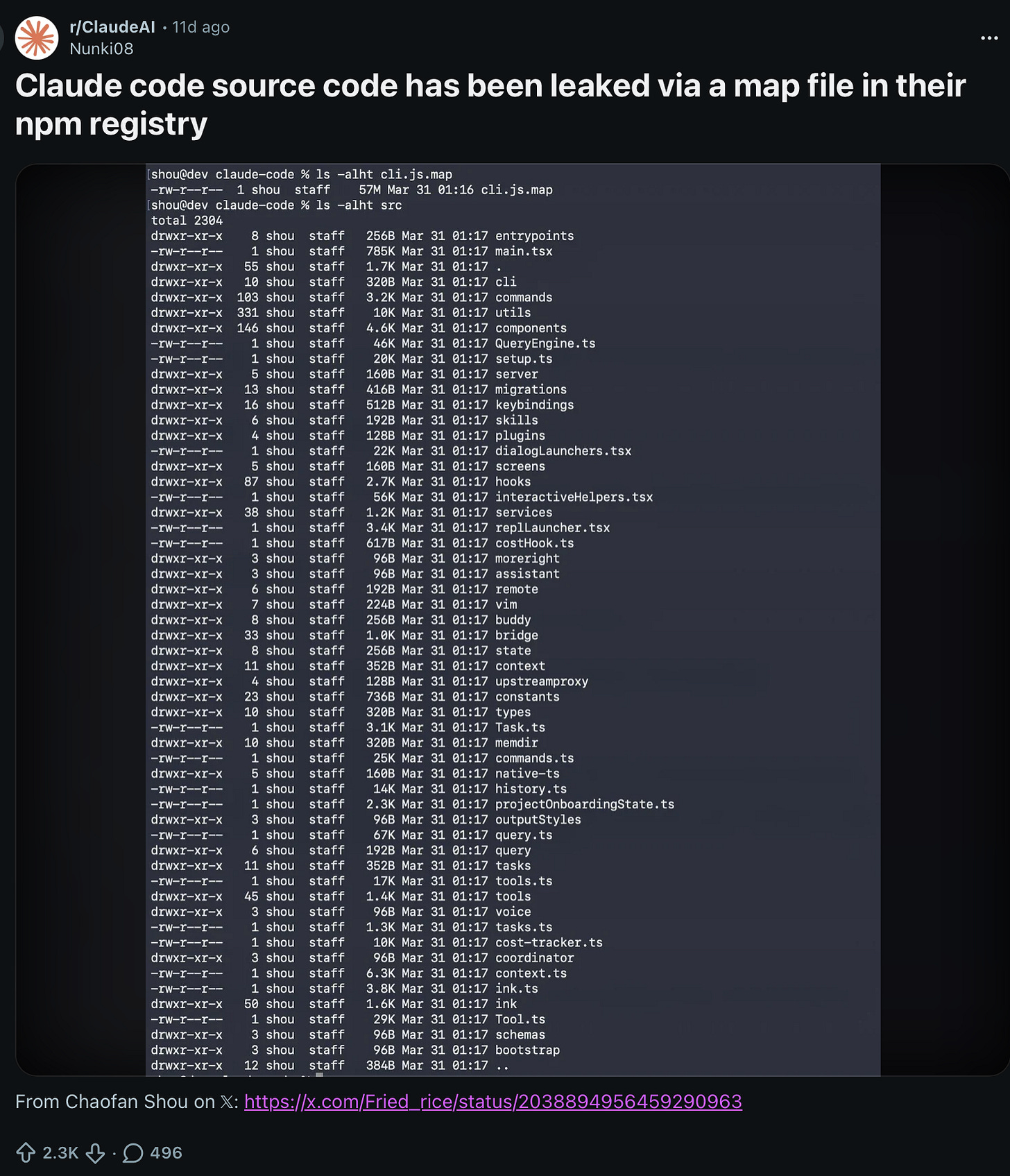

On the one hand, Anthropic is leading the taskforce to secure the world’s software, but on the other hand, the entire Claude Code source code leaked a few weeks ago.

Another hilarious paradox: models continue to astound researchers in coding and cybersecurity, yet videos of Sam Altman reacting to ChatGPT hallucinations keep going viral.

And because there’s an influx of amateur software developers, code leaks and basic security mistakes will become even more prevalent. Here’s a perfect example:

We’re entering an era where these models are incredibly powerful, capable of breaking through hardened systems, but they will also produce brittle or vulnerable code, hallucinate, and enable us to make simple security mistakes.

We’re entering the AI paradox era.

Agents will be powerful enough to exploit software vulnerabilities that no one has cracked for 20 years, and yet they’ll also lead us into silly mistakes, whether through a hallucination or a coding agent leaking a code repository or API keys.

New jobs we couldn’t have predicted

Remember the promise that AI will create jobs no one could’ve imagined? So far, I’m seeing some evidence that could be true.

Last week, I wrote about agentic credit card start-ups: a new product category for agents that we couldn’t have predicted just two short years ago.

Now as part of Project Glasswing, Anthropic is actively hiring for roles that barely existed a few years ago: threat investigators, offensive security researchers, policy specialists, and security engineers focused specifically on AI security.

As models get better at probing systems, the attack surface expands. And as the attack surface expands, the demand for people who can defend it grows just as quickly.

In many domains, like coding and security, AI is increasing the complexity of the problem space, which creates new categories of work.

“Ship fast, fix later” is breaking

For the last 20 years, software has operated on a simple assumption:

Ship fast

Fix bugs later

Patch vulnerabilities when needed

This worked because finding bugs was hard, exploiting them was harder, and attackers were resource-constrained.

But a model like Claude Mythos makes finding and exploiting vulnerabilities fast and cheap.

The “we’ll fix this later” mindset won’t survive that. And this has real implications for how products get built.

Historically, Product Managers have made conscious trade-offs in early versions of a product. You might have accepted low-probability security risks to launch earlier or to add one more feature.

Now, PMs will need to recalibrate what trade-offs they make for an MVP.

In some cases, the safest decision will likely be to ship something simpler, with fewer features, but a smaller attack surface.

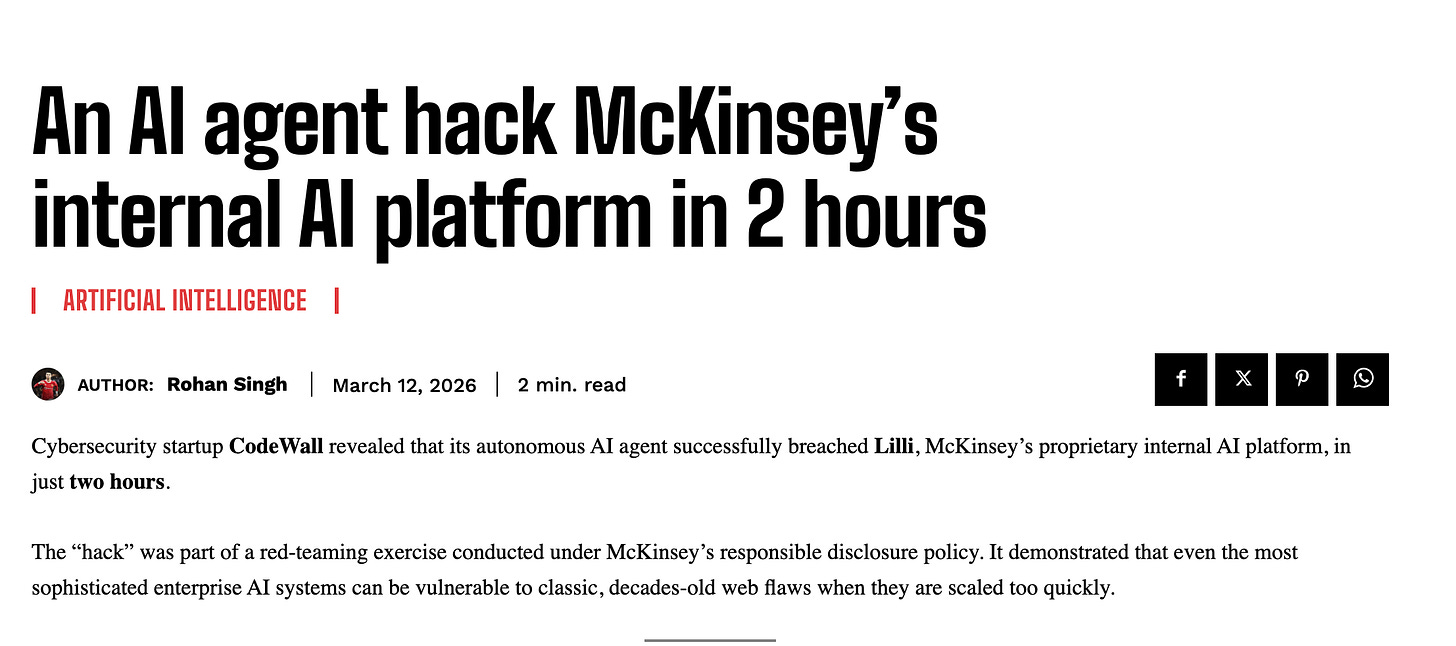

CodeWall’s demo hack of McKinsey’s internal AI platform in just 2 hours is a perfect case in point. Hacking will become easier than ever. Product Builders will be forced to slow down development to address vulnerabilities.

The hidden cost of AI productivity

AI is making it dramatically faster to write code. That part is obvious.

What’s less obvious is that the time saved may not translate into faster shipping. Instead, in a post-Mythos world, the speed gains may be swallowed by additional testing, validation, and security requirements.

So while AI will increase development velocity, it will also increase the amount of work required to secure what’s being built.

The net effect will probably be a rebalancing of where time gets spent, rather than faster development cycles.

Final thoughts

For years, the product mantra was simple: move fast and break things.

That worked in a world where breaking things was recoverable, where issues could be patched before they were widely exploited.

Now, security will be the foundation of products. And with models like Mythos only growing in capability, that foundation will need to be a lot stronger than it used to be.